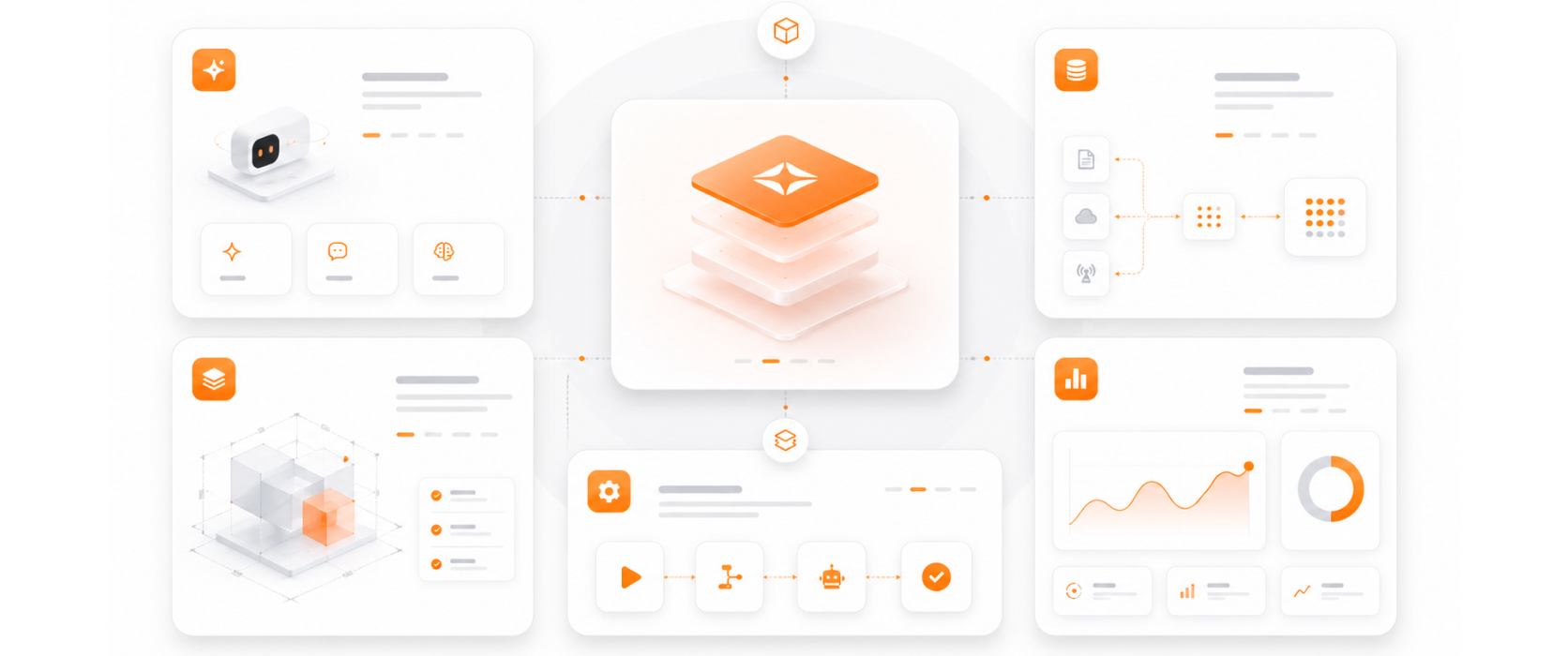

Very few QA practices cover both traditional application testing and AI-specific quality assurance. Ours does — because we deliver both digital platforms and AI agents, and both need to be tested rigorously before going into production.

Our AI QA framework was built from real production experience testing LLM systems — not adapted from traditional software testing. Accuracy against ground truth, hallucination detection, and behavioral permutation testing are core capabilities, not add-ons.
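As a rough illustration of two of these checks, the sketch below scores a model against ground-truth answers and tests whether rephrased versions of one question produce the same answer. It is a minimal example, not our production framework: `toy_model`, `accuracy`, and `consistent_across_variants` are hypothetical names, and exact-match comparison after normalization is an assumed, deliberately simple scoring rule.

```python
from typing import Callable, Iterable, Sequence, Tuple

Model = Callable[[str], str]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())


def accuracy(model: Model, cases: Sequence[Tuple[str, str]]) -> float:
    """Fraction of (question, expected_answer) pairs the model answers exactly right."""
    if not cases:
        return 0.0
    correct = sum(normalize(model(q)) == normalize(expected) for q, expected in cases)
    return correct / len(cases)


def consistent_across_variants(model: Model, variants: Iterable[str]) -> bool:
    """Permutation check: every rephrasing of one question should yield one answer."""
    answers = {normalize(model(v)) for v in variants}
    return len(answers) == 1


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call, used only to make the sketch runnable."""
    return "Paris" if "france" in prompt.lower() else "unknown"


if __name__ == "__main__":
    ground_truth = [
        ("What is the capital of France?", "paris"),
        ("What is the capital of Peru?", "Lima"),
    ]
    print("accuracy:", accuracy(toy_model, ground_truth))
    print(
        "consistent:",
        consistent_across_variants(
            toy_model, ["Capital of France?", "Which city is the capital of FRANCE?"]
        ),
    )
```

In practice, exact matching would usually give way to a scoring method suited to free-text answers, but the shape of the test stays the same: fixed inputs, known expected outputs, and a pass/fail signal.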

QA engineers are part of every delivery team from sprint one — writing test cases alongside feature development, not reviewing outputs after the fact.

Our QA capability is automation-first: test suites run in CI/CD pipelines and flag issues before they reach production, rather than relying on manual testers reviewing releases at the end.

We report quality through concrete KPIs: test coverage percentage, defect escape rate, AI accuracy score, hallucination rate, and regression pass rate. Quality is visible and tracked, and it improves with every release.
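To show how such KPIs can be derived from raw counts, here is a minimal sketch. The `ReleaseStats` fields and the formulas are assumptions for illustration: in particular, defect escape rate is taken as production defects divided by all defects found, which is a common definition but not the only one in use.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ReleaseStats:
    """Raw counts for one release (hypothetical field names)."""
    defects_found_before_release: int
    defects_found_in_production: int
    regression_tests_run: int
    regression_tests_passed: int
    ai_answers_evaluated: int
    ai_answers_correct: int
    ai_answers_with_hallucination: int


def ratio(numerator: int, denominator: int) -> float:
    """Safe division: returns 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def quality_kpis(stats: ReleaseStats) -> Dict[str, float]:
    """Compute each KPI as a fraction between 0 and 1."""
    total_defects = (
        stats.defects_found_before_release + stats.defects_found_in_production
    )
    return {
        # Assumed definition: share of all defects that escaped to production.
        "defect_escape_rate": ratio(stats.defects_found_in_production, total_defects),
        "regression_pass_rate": ratio(
            stats.regression_tests_passed, stats.regression_tests_run
        ),
        "ai_accuracy": ratio(stats.ai_answers_correct, stats.ai_answers_evaluated),
        "hallucination_rate": ratio(
            stats.ai_answers_with_hallucination, stats.ai_answers_evaluated
        ),
    }


if __name__ == "__main__":
    # Made-up numbers, for illustration only.
    example = ReleaseStats(45, 5, 200, 198, 400, 372, 12)
    for name, value in quality_kpis(example).items():
        print(f"{name}: {value:.1%}")
```

Tracking the same fractions release over release is what makes a trend visible; the exact definitions matter less than keeping them fixed.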