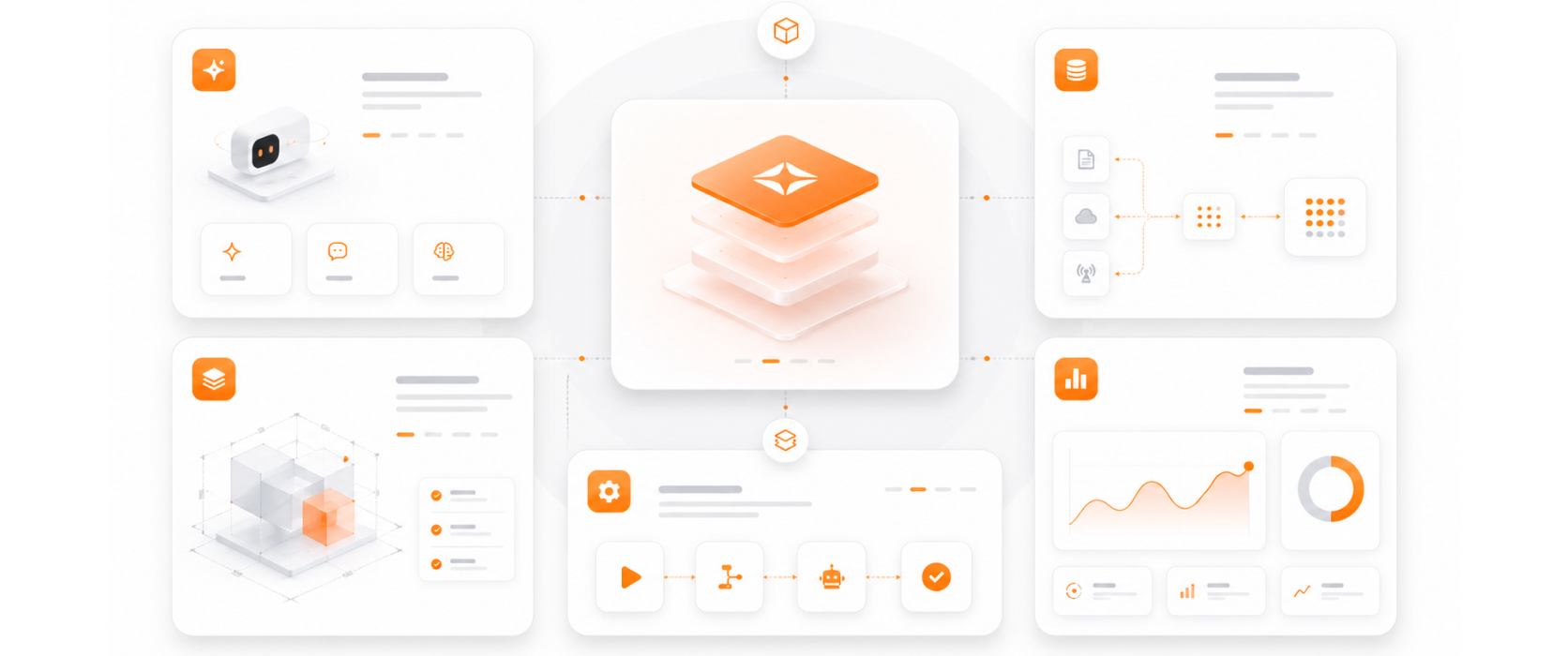

MLOps is built into every AI project we deliver, not offered as an optional add-on. Every model we deploy has CI/CD, monitoring, and lifecycle management from day one.

Our drift detection frameworks are calibrated for LLM and agent workloads — not just classical ML models — covering prompt drift, output quality regression, and behavioral changes.
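
To make "output quality regression" concrete, here is a minimal sketch of one way such a check could work. It is an illustration, not the framework itself. The function name `quality_regressed`, the 0-to-1 quality scores, and the z-score threshold are all assumptions chosen for the example. It compares the mean score of a recent window of responses against a baseline window and flags a drop that is unlikely to be noise.

```python
from statistics import mean, stdev


def quality_regressed(baseline, recent, z_threshold=3.0):
    """Return True if recent quality scores are significantly below baseline.

    `baseline` and `recent` are lists of per-response quality scores
    (hypothetical: e.g. 0.0-1.0 ratings from an automated evaluator).
    `baseline` needs at least two scores and `recent` at least one.
    """
    base_mean = mean(baseline)
    base_sd = stdev(baseline)
    # Standard error of the mean for a window the size of `recent`.
    std_err = base_sd / len(recent) ** 0.5
    if std_err == 0:
        # Baseline has no variance: any drop in the mean counts as a regression.
        return mean(recent) < base_mean
    z = (mean(recent) - base_mean) / std_err
    return z < -z_threshold


# Illustrative scores only, not real measurements.
baseline_scores = [0.90, 0.92, 0.88, 0.91, 0.89, 0.90, 0.93, 0.87]
stable_window = [0.90, 0.91, 0.89, 0.90]
degraded_window = [0.70, 0.72, 0.68, 0.71]

print(quality_regressed(baseline_scores, stable_window))
print(quality_regressed(baseline_scores, degraded_window))
```

A real deployment would likely track several signals (prompt distribution, tool-call behavior, evaluator scores) rather than one mean, but the baseline-versus-recent comparison is the common core.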

Our MLOps pipelines produce full audit documentation (model versions, training data lineage, deployment history, and performance records) to support compliance in regulated industries.

Model performance monitoring includes inference cost tracking and optimization recommendations — keeping AI operational costs within budget as usage grows.
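
As a rough illustration of inference cost tracking, the sketch below prices each request from its token counts and projects month-end spend from the month-to-date total. The per-token prices, the `Usage` type, and the function names are hypothetical placeholders for this example, not actual provider rates or a specific tool's API.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; substitute your provider's rates.
INPUT_PRICE_PER_1K = 0.50
OUTPUT_PRICE_PER_1K = 1.50


@dataclass
class Usage:
    """Token counts for a single inference request."""
    input_tokens: int
    output_tokens: int


def request_cost(usage: Usage) -> float:
    """Cost of one request in USD under the assumed prices."""
    return (usage.input_tokens / 1000 * INPUT_PRICE_PER_1K
            + usage.output_tokens / 1000 * OUTPUT_PRICE_PER_1K)


def projected_monthly_cost(spend_so_far: float, days_elapsed: int,
                           days_in_month: int = 30) -> float:
    """Linear projection of month-end spend from the average daily spend so far."""
    return spend_so_far / days_elapsed * days_in_month


def over_budget(spend_so_far: float, days_elapsed: int, budget: float,
                days_in_month: int = 30) -> bool:
    """True if the projected month-end spend exceeds the budget."""
    return projected_monthly_cost(spend_so_far, days_elapsed, days_in_month) > budget


# Example: $100 spent after 10 days projects past a $250 monthly budget.
print(request_cost(Usage(input_tokens=2000, output_tokens=1000)))
print(over_budget(spend_so_far=100.0, days_elapsed=10, budget=250.0))
```

A linear projection is the simplest choice and will underestimate spend when usage is growing; a trend-aware forecast would be a natural refinement.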

Every MLOps engagement includes operational runbooks, so your team can manage and maintain AI systems independently after handover.